On March 16, 2026, Nvidia CEO Jensen Huang unveiled DLSS 5 at the GPU Technology Conference. The internet reacted poorly, to put it lightly. When Tom's Hardware pressed him about the backlash during a Q&A session the following day, Huang claimed critics are "completely wrong" and that DLSS 5 "fuses controllability of the geometry and textures and everything about the game with generative AI." He said it's "not post-processing at the frame level" but "generative control at the geometry level."

Days later, Nvidia's own Jacob Freeman, a GeForce Evangelist, answered questions from YouTuber Daniel Owen about how the technology actually works.

"Yes, DLSS 5 takes a 2D frame plus motion vectors as input… DLSS 5 only takes the rendered frame and motion vectors as inputs. Materials are inferred from the rendered frame… The underlying geometry is unchanged."

The CEO didn't just say one thing. He adamantly defended it, and his own staff confirmed the opposite within the week. That's the foundation of what we have to work with.

Let’s Call It What It Is

Nvidia calls DLSS 5 “Neural Rendering”, but the term is doing a lot of work to avoid a simpler one. Here's what the technology actually does. The game engine renders a frame. DLSS 5 takes that rendered 2D image and its motion vectors, feeds them into a generative AI model, and the model produces a new frame with inferred lighting, materials, and surface detail. The output replaces the original. What we should be calling this is “Generative Rendering.”
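To make that data flow concrete, here is a minimal Python sketch of the handoff as Freeman described it. Every name in it (`Scene`, `EngineOutput`, `generative_pass`, `toy_model`) is a hypothetical stand-in, not Nvidia's API, and the "model" is a trivial brightening function rather than a neural network. The only point is the shape of the interface: the generative pass never receives the scene.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Pixel = Tuple[int, int, int]


@dataclass(frozen=True)
class Scene:
    """Authored data that lives inside the engine (hypothetical stand-in)."""
    geometry: str
    materials: str


@dataclass(frozen=True)
class EngineOutput:
    """What the engine hands off: a flat 2D image plus motion vectors."""
    frame: List[Pixel]
    motion_vectors: List[Tuple[float, float]]


def engine_render(scene: Scene) -> EngineOutput:
    # Placeholder renderer: a real engine rasterizes or ray traces the scene here.
    frame = [(120, 90, 60)] * 4
    motion = [(0.0, 0.0)] * 4
    return EngineOutput(frame=frame, motion_vectors=motion)


def toy_model(pixel: Pixel) -> Pixel:
    # Stand-in for the generative model: brightens each channel, clamped to 255.
    return tuple(min(255, channel + 40) for channel in pixel)


def generative_pass(inputs: EngineOutput, model: Callable[[Pixel], Pixel]) -> List[Pixel]:
    # Note the signature: no Scene, no geometry, no material data.
    # Anything the model "knows" about materials is inferred from the 2D frame.
    # (This toy ignores the motion vectors it is handed.)
    return [model(pixel) for pixel in inputs.frame]


def present(scene: Scene) -> List[Pixel]:
    rendered = engine_render(scene)
    generated = generative_pass(rendered, toy_model)
    # The generated frame is what reaches the screen; the rendered one is discarded.
    return generated


print(present(Scene(geometry="low-poly", materials="flat")))
```

The scene's authored geometry and materials stop at `engine_render`. Whatever comes out the other side is the model's interpretation of the pixels it was given.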

Previous DLSS iterations did something that looks similar but is fundamentally different. Upscaling reconstructed a higher-resolution version of the frame the engine rendered. Frame generation interpolated between frames the engine rendered. Ray reconstruction filled in lighting data the engine was already computing. All of those completed work the engine started. DLSS 5 flips that relationship. The model takes a flat 2D frame and decides what it should look like at higher fidelity. The engine used to deliver the artist's vision. Now it's just providing a reference sketch for the model to generate over.

Games have used post-processing filters for decades. That isn’t new. FXAA smooths edges. Bloom simulates light bleed. Chromatic aberration mimics a camera lens. All of them modify the presentation of what the engine rendered. None of them generate new visual information that wasn't in the authored scene. What we’re looking at with DLSS 5 isn’t a filter. It’s fabrication.

I’ve Been Burned Once Before

DLSS launched in 2018 as an optional performance tool. Render at a lower resolution and let the AI reconstruct the detail. The pitch was straightforward: better frame rates without visible quality loss. It seemed like a win for everyone. Over time, developers adapted rationally. If players are running DLSS by default, and the upscaled output looks comparable to native rendering, then native resolution stops being the optimization target. Why spend cycles rendering every pixel at 4K when the upscaler handles the gap? The development pipeline shifts. You build to the upscaled output, not the native input.

Monster Hunter Wilds is what this looks like eight years later. At native 4K ultra settings, even the RTX 4090 dips into the high 40s. Turning things down doesn't help because the settings don't meaningfully scale. The only way to hit playable frame rates is upscaling and frame generation, and the game knows it. It prompts you to enable frame generation before you've even played a single hunt. Wilds isn't an isolated case. Remnant 2's developers openly admitted the game was "designed with upscaling in mind." Alan Wake 2 also listed upscaling in every tier of its system requirements, down to 1080p. Upscaling stopped being an option. It became the baseline. I've seen this pattern before, and generative rendering looks like the next iteration.

Minimum Viable Raster

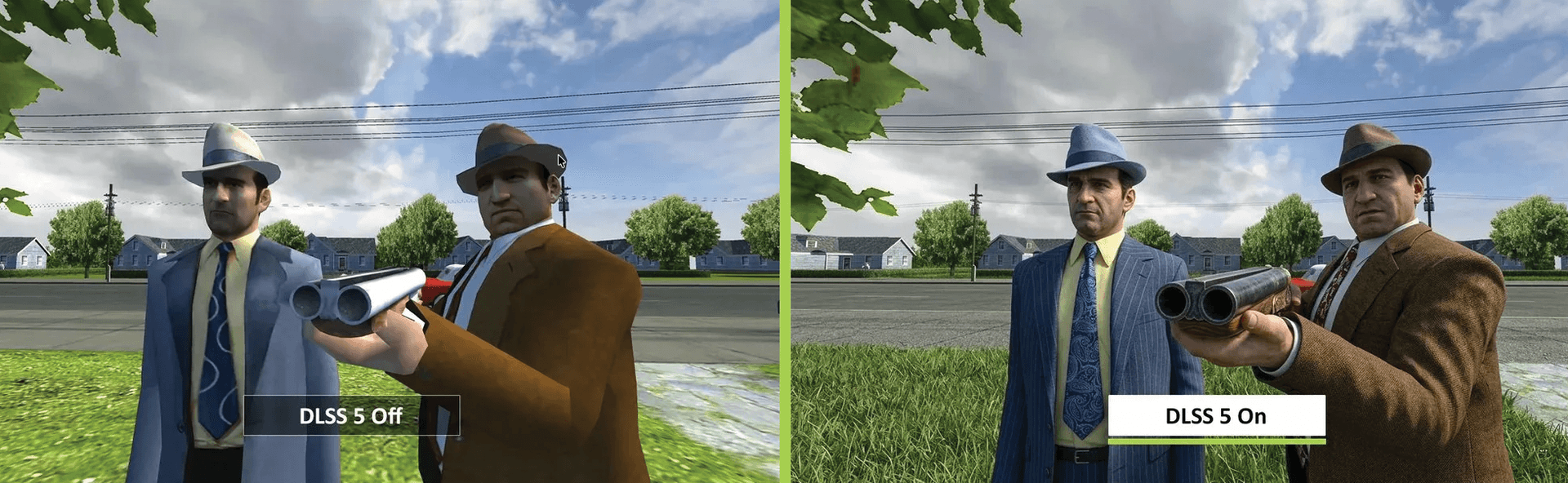

During the post-announcement chaos, someone put together a meme that indirectly made the best argument against generative rendering. They took the original Mafia, the 2002 Illusion Softworks game, and ran it through a mock DLSS 5 comparison. DLSS 5 “off” shows the game as it shipped. Low-polygon characters in suit jackets, simple geometry and flat lighting. DLSS 5 “on” shows something that could pass for a game released in the last five years. Realistic fabric texture, subsurface skin detail, and accurate material definitions on the shotgun metal.

It's a meme, but it's also the thesis.

Take a moment to look at the "off" image. Every piece of information the model needs is already there. The light source direction. The color of the clothing. The material distinction between fabric and metal. The spatial relationship between the characters. The geometry communicates enough for the model to infer the rest. The 2002 art team didn't build photorealistic PBR materials. They didn't need to. The model painted them on after the fact.

Now extend that logic into a production pipeline. If generative rendering becomes standard, the question every developer will ask is simple. How much raster fidelity do I actually need to provide for the model to generate a convincing output? If the answer is "significantly less than what we're currently building," then every hour spent on photorealistic asset work above that threshold is wasted labor. To be exceptionally clear, this is not because the artist is lazy, but because the pipeline doesn't require it.

This is what I'm calling Minimum Viable Raster, a term that is intentionally soulless and corporate. The lowest level of authored visual fidelity that still provides sufficient input data for the generative model to produce the desired output. If the model can turn 2002 geometry into something that reads as modern, then every polygon and texture map above that floor is overhead.

The counterargument writes itself. More input data produces better generative output. But the architecture itself undercuts this. The entire DLSS trajectory has pushed the input resolution lower. DLSS 4.5 already generates 23 out of every 24 pixels on screen. You're not rewarded for providing more. You're rewarded for providing enough. Upscaling already proved this. It shifted the optimization target from native resolution to "whatever the upscaler can reconstruct," and developers stopped building for native 4K because the technology removed the need. Generative rendering applies the same logic to visual fidelity itself. Not just resolution, but the actual authored quality of the assets, lighting, and materials. No studio has shipped a game built to this philosophy yet. But the incentive structure points here, and the industry's track record shows that when a technology enables a shortcut, studios take it. Not out of malice or laziness, but out of rational efficiency. The real question is this: why would a studio invest in visual fidelity beyond what the model needs to do its job?
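For anyone wondering how a number like 23 out of 24 comes about, here is one way to arrive at it. This is a back-of-the-envelope sketch under my own assumption, not a breakdown taken from the sources below: the engine renders one in four pixels of each frame it draws (a quarter-resolution internal image), and frame generation shows six frames for every one the engine renders. The function name and parameters are hypothetical.

```python
from fractions import Fraction


def generated_pixel_share(upscale_area_ratio: int, frame_multiplier: int) -> Fraction:
    """Fraction of displayed pixels that the engine did not render itself.

    upscale_area_ratio: output pixels per rendered pixel within a frame (assumed).
    frame_multiplier: displayed frames per engine-rendered frame (assumed).
    """
    rendered_share = Fraction(1, upscale_area_ratio) * Fraction(1, frame_multiplier)
    return 1 - rendered_share


# Assumed settings: quarter-resolution rendering with six displayed frames per rendered frame.
print(generated_pixel_share(4, 6))  # 23/24

# Same upscaling ratio with four displayed frames per rendered frame, for comparison.
print(generated_pixel_share(4, 4))  # 15/16
```

Under those assumptions the engine's share of what you see is 1/24, and each bump to the frame multiplier shrinks it further without the engine doing anything differently.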

What Gets Lost

Assume studios adopt minimum viable raster as a production philosophy. The model handles the fidelity gap. What happens to the people who used to do that work?

The first generation of artists who mastered rasterized rendering will still have those skills. But if the studios hiring them no longer need that level of fidelity, what incentive is there for the next generation to develop them? And that question only compounds with each subsequent generation. Why would a junior artist spend years learning to build high-fidelity assets if the pipeline only requires a minimum viable input and the generative layer handles the rest? The incentive disappears. Not because the knowledge is forbidden, and not because developers are lazy, but because nothing in the system demands it.

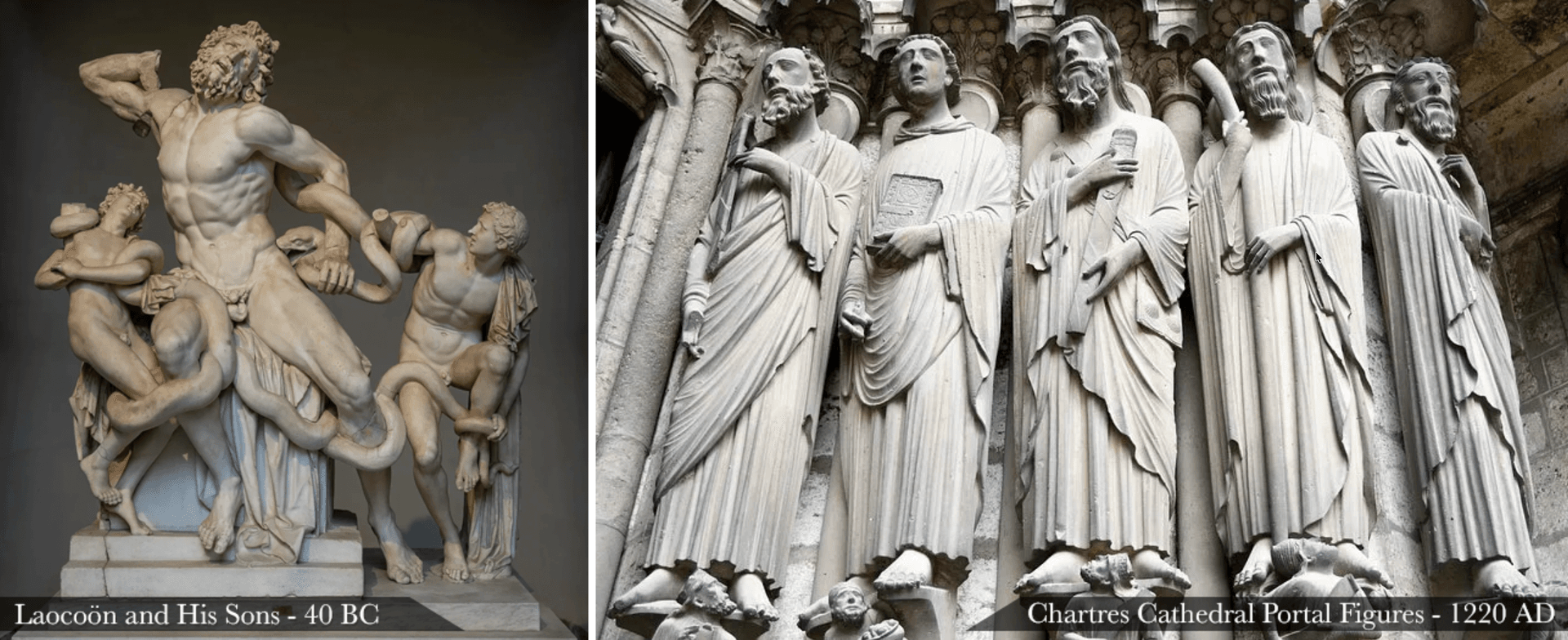

This mechanism isn't new. Classical antiquity produced art with perspective, anatomical accuracy, and naturalistic lighting. When the institutions that demanded those skills collapsed, when patronage shifted to favor symbolic communication over naturalism, the skills atrophied over generations. The skills weren't lost because they were suppressed. They were lost because no institution required them. I'm not predicting a thousand-year dark age of game art. I'm pointing at the pattern. When institutional demand for a skill set disappears, the skills follow. If generative rendering removes the demand for high-fidelity authored assets, the skill set that produces them erodes. Not because anyone decided it should, but because nothing in the pipeline requires it to survive. And once those skills are gone, the generative layer stops being an option. It becomes the only path left.

Locked In

Up until now, the standards that power real-time rendering, DirectX, Vulkan, and DXR, have been vendor-agnostic. The techniques built on them, from specular and bump mapping to normal mapping, PBR, and ray tracing, produced the same image regardless of who made your GPU. Your hardware determined how fast the computations happened, but it didn't drive what the output looked like.

Upscaling was the first crack in that. DLSS was Nvidia-proprietary. FSR was AMD's answer. XeSS was Intel's. They all produced slightly different outputs from the same input, but those differences were subtle. Different sharpness, different edge handling, different artifact behavior. None of them were fabricating a new image. Previous upscalers worked with what the engine gave them. Generative rendering builds entirely new frames. Nvidia's model generates one output. AMD, if they build their own, generates an entirely different one based on its own training data. Any other vendor with their own proprietary model produces yet another interpretation, whether that's Intel, Sony, or anyone else. Unless the tools exist to ensure the output is identical across hardware, we're heading toward a world where different hardware shows you different games. Not at a quantitative level, but a qualitative one.

The frustrating part is that the open path is being built. Microsoft is actively developing Cooperative Vectors for DirectX, a cross-vendor standard for running neural networks directly inside the rendering pipeline. AMD, Intel, Nvidia, and Qualcomm are all involved. It's the natural extension of what DirectX and DXR have always done, giving developers vendor-agnostic tools. Nvidia shipped DLSS 5 entirely outside of that effort, through their proprietary Streamline framework, using their own model on their own hardware. The open infrastructure is being laid, but Nvidia didn't wait for it.

And that's before you account for the consumer who doesn't have access to any generative layer at all. If studios adopt minimum viable raster as their pipeline, building to a lower fidelity target with the expectation that the generative layer closes the gap, then the player without that layer doesn't get a lower settings tier. They get the reference sketch. The unfinished version. The product that was never meant to be seen on its own.

The Version Worth Wanting

I want to give this room to breathe though, because the strongest version of the counterargument is genuine. The last thing I want to do is be all doom and gloom about technological advancement. Technology always grows, and it’s standard practice to grow and adapt with it.

Rendering budgets have eaten everything else in game development for two decades. The visual fidelity arms race consumed every available cycle of compute and every available hour of artist labor. During the seventh generation, games like Crysis and Red Faction: Guerrilla delivered destructible environments, physics-driven gameplay, dynamic simulations that made the world feel alive. Over the years, all of it got sacrificed on the altar of "does this screenshot look photorealistic."

If generative rendering genuinely reduces the raster overhead, that freed compute could go elsewhere. More detailed animation systems, destructible environments that respond to player interaction, AI-driven NPC behavior that isn't scripted, dynamic weather and ecosystems. Physics simulations that make explosions feel like Red Faction did in 2009, where you watched a structure come apart piece by piece and every fragment interacted with the scene in a realistic, albeit simulated, way.

There's a version of this technology that could get us there. One where the generative layer is a tool the artist controls, trained on the studio's own visual language, reinforcing the authored vision rather than overwriting it. That future is worth wanting.

Centralized Compute

Thing is, even in the best case, the math likely doesn't work the way the optimists think it does. Generative models don't stay small. Every iteration gets bigger and demands more compute to run. The raster overhead shrinks, but the model overhead grows to fill the gap. The net compute available for simulation, physics, and AI-driven gameplay doesn't actually increase. It just shifts from one budget to another, in this case from the raster input to the generative output.
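Here is that budget shift as a toy calculation. Every number is invented for illustration: a 16 ms frame (roughly 60 fps) and two imagined hardware-and-model generations. None of it is measured from DLSS 5 or any shipping game, and `leftover_for_simulation` is a hypothetical helper.

```python
FRAME_BUDGET_MS = 16.0  # roughly 60 fps; illustrative, not a measurement


def leftover_for_simulation(raster_ms: float, model_ms: float) -> float:
    """Frame time remaining for physics, AI, and simulation after rendering costs."""
    return FRAME_BUDGET_MS - raster_ms - model_ms


# Hypothetical generations: raster cost halves, model cost grows to fill the gap.
generations = {
    "today (heavy raster, light model)": (12.0, 2.0),
    "later (light raster, heavy model)": (6.0, 8.0),
}

for label, (raster_ms, model_ms) in generations.items():
    remaining = leftover_for_simulation(raster_ms, model_ms)
    print(f"{label}: {remaining:.1f} ms left for simulation")
```

With these made-up figures, both generations leave the same 2 ms for everything that isn't pixels. The optimistic case only holds if the model's cost grows more slowly than the raster cost shrinks.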

There’s also a ceiling on local hardware, and this is the scary part. These models could very well outgrow what a consumer GPU can run efficiently in real time. When that happens, the inference moves to data centers. The generative layer stops running on your machine and starts running on Nvidia's servers, using Nvidia's models, on Nvidia's infrastructure. At that point you're not just locked into a proprietary model. You're locked into a proprietary cloud, assuming your network connection is even strong enough to sustain it.

If the model runs in a data center, it doesn't matter whether the player has an RTX 5090 or a five-year-old laptop. The game ships everywhere. Lower minimum specs, wider audience, more sales. The freed compute doesn't fund better gameplay. It funds broader reach. The version worth wanting doesn't arrive because selling to everyone will always win over building something deeper.

It Ends With You

None of this was introduced to us cleanly. Jensen told the public one thing. His own employee confirmed a different reality within the week. Then he went on a podcast and agreed that AI slop is bad, after telling the press that critics were "completely wrong" days earlier.

A lot of what I've laid out here is a thought experiment. Minimum viable raster, the skill atrophy, the centralized compute path, none of that has happened yet. These are projections based on what the upscaling precedent already proved and what historical patterns show happens when incentive structures shift. I could be wrong about all of it. The idealistic version of this technology, where the artist keeps control and the freed compute goes to making games play better, that future would be genuinely good. I'd welcome it.

But I gave them the benefit of the doubt once before. With upscaling, there was a level of trust. The technology was new, the intentions seemed genuine, and there was a curiosity in seeing where it would go. In under a decade, optional became mandatory, and that trust was spent. Now they're asking for it again with generative rendering, except this time they can't even keep their story straight about what it does. The way this was introduced doesn't earn the optimistic read. It earns the pattern-based one, and the patterns, currently, all point in the same direction.

Sources

- NotebookCheck (March 24, 2026). "Nvidia CEO Jensen Huang backtracks on DLSS 5 criticism after gamer backlash in podcast." NotebookCheck.

- Lex Fridman Podcast #494 (March 23, 2026). "Jensen Huang: NVIDIA - The $4 Trillion Company & the AI Revolution." Lex Fridman.

- Digital Foundry (March 22, 2026). "The Big PSSR Interview With Mark Cerny." Digital Foundry.

- Kotaku (March 21, 2026). "Nvidia CEO's Defense Of DLSS 5 Gets Contradicted By One Of His Employees." Kotaku.

- VideoCardz (March 20, 2026). "NVIDIA confirms DLSS 5 uses a 2D frame plus motion vectors as input." VideoCardz.

- Tom's Hardware (March 18, 2026). "Jensen Huang says gamers are 'completely wrong' about DLSS 5." Tom's Hardware.

- Nvidia Newsroom (March 16, 2026). "NVIDIA DLSS 5 Delivers AI-Powered Breakthrough in Visual Fidelity for Games." Nvidia.

- DSOGaming (March 2025). "Monster Hunter Wilds Benchmarks & PC Performance Analysis." DSOGaming.

- PCOptimizedSettings (March 2025). "Monster Hunter Wilds PC Optimization: Best Settings + Benchmarks." PCOptimizedSettings.

- Microsoft DirectX Developer Blog (September 16, 2025). "D3D12 Cooperative Vector." Microsoft.

- Microsoft DirectX Developer Blog (January 7, 2025). "Enabling Neural Rendering in DirectX: Cooperative Vector Support Coming Soon." Microsoft.

- TechSpot (October 21, 2023). "Alan Wake II assumes everyone will use upscaling, even at 1080p." TechSpot.

- Tom's Hardware (July 26, 2023). "Remnant II Devs Designed Game With DLSS/FSR 'Upscaling In Mind.'" Tom's Hardware.
